As cyber professionals, we are all aware that there is a myriad of cyber frameworks, models, and standards to which organizations can choose to align their cyber programs. As a cyber risk consultant within U.S. critical infrastructure, I am regularly asked about the differences and organizational advantages between, say, a framework and a model: a reasonable question with an easily digestible answer (discussed below). What is troubling, however, is that organizations seek this knowledge to enable a single decision: which framework, model, or standard (or best practice) should the organization align to? What is lost in this search is that alignment should not be a one-and-done, single-document decision.

Understanding and appropriately applying the intended use case of each document type is essential to ensure organizational alignment and the proper and complete execution of the organization's cyber strategy. Though it is not often discussed, it is equally important to understand that each of these document types (frameworks, models, standards, and sector-specific standards) carries an assumption about where it should be applied within the organization's own hierarchy. This assumed prescription, as the wording implies, is far more likely to be implicit than explicit within the pages of these documents. It also fails to account for organizations' tendency to seek out and align to a single document, regardless of (or in ignorance of) the chosen material's stratified applicability. This article will discuss the normative (optimal) flow of cyber documents and the perils of the implicitness within them.

Note: I am not aware of a convenient way to reference the whole cyber document family: framework, model, standard, and industry- or sector-specific standard. For the purposes of this article, the capitalized plural Cyber Standards (or Standards) will refer to this suite of documents, whereas the lowercase standard will refer to cyber standards themselves (e.g., the NIST SP 800-53 standard). Sector standards shall remain unchanged. This is not a preferred schema, so if anyone knows of a lexical norm, please comment below.

Complementarity Conflict of Normative Knowledge (and Mappings)

- A normative model (model, in social science usage) answers: what is the optimal way to solve a problem?

- A descriptive model (sometimes known as a process model; in cybersecurity, prescriptive, as in prescriptive controls, is used synonymously) answers: how should this problem be solved, i.e., what is the optimal solution given specific constraints?

Ordinarily, it would be enough to define and differentiate these Cyber Standards, because, once the distinctions are established, the implied expectation is that an organization can appropriately implement each of the interplaying Cyber Standards. This expectation, however, carries an assumption of knowledge: that an organization can delineate the normative portions of a Standard from its descriptive portions. The premise also ignores the typical organizational behavior of choosing and aligning to a single document, such as the NIST CSF or the DOE's Cybersecurity Capability Maturity Model (C2M2).

The term “organizational alignment” refers to adopting a chosen document to guide the creation and implementation of the overall cyber program. Additionally, organizations generally perform self- or third-party assessments against this aligning document. That's all a good thing, right? Right? You bet it is! The peril comes when organizations fail to define and align to Standards up- or down-chain of this umbrella framework or model. For the sake of brevity, let my forthcoming statement on strata coordination and the practical inadequacy of document mappings serve as a placeholder for that deservedly longer topic. Suffice it to say, aligning to a single, omni-aligned document can quickly result in confusion, misuse, and underutilization, which in turn can breed overconfidence and cause program and control gaps.

This implied expectation of knowledge rests on a tacit duality within each document: each serves as both a normative and a descriptive model. That is, each represents both the optimal theory and the prescription for its application. This duality, regardless of whether the document formally acknowledges it, results in a Standard's fallacy of knowledge and application: the assumption that the reader knows 1) the intended application of the document, as generally outlined in its front matter, and 2) how to coordinate the flow of materials within their use-case hierarchy.

As a normative model, each Standard type, with few exceptions, myopically describes its own intended usage, thus fulfilling the first knowledge requirement identified above. However, because of their isolated creation, the second requirement, the interplay and hierarchical application of the documents, is rarely documented (for reasons we will soon see, a mapping does not serve as strata coordination). When hierarchical recognition is given, it is never with the sufficient and practical detail one would expect from a document serving as both a normative and descriptive model (as Bayes' theorem or the Pythagorean theorem does). This holds true even for Standards from the same Standards Development Organization (SDO), where inter-document coordination could be readily facilitated.

The only tacit acknowledgment of Standards interplay comes from controls mappings. All credible Standards supply a mapping that connects, say, a subcategory from the NIST Cybersecurity Framework (CSF) to practices or controls found in its lateral, ascending, or descending hierarchical documents. Though beyond the scope of this article, it is worth noting that these mappings are one-to-many and non-unique within a document: the same lower-tier practice or control is often mapped from several higher-tier items. This is especially egregious in the higher-tier documents because they provide higher-level guidance and therefore require multiple mappings to their lower-tier practice and control counterparts. The result is a complementarity conflict, which further entrenches the Standards fallacy of knowledge and application.
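To illustrate the non-uniqueness problem, here is a minimal Python sketch that inverts a toy one-to-many mapping and then asks which higher-tier items look "covered." The identifiers are hypothetical placeholders, not real CSF subcategories or SP 800-53 controls, and the two coverage rules ("any" and "all") are illustrative assumptions, not rules taken from any published mapping.

```python
from collections import defaultdict

# Hypothetical one-to-many mapping from higher-tier items (think framework
# subcategories) to lower-tier controls. These IDs are illustrative
# placeholders, NOT real NIST CSF or SP 800-53 identifiers.
MAPPING = {
    "FW-1": ["CTL-A", "CTL-B", "CTL-C"],
    "FW-2": ["CTL-B", "CTL-D"],
    "FW-3": ["CTL-C", "CTL-D", "CTL-E"],
}


def invert(mapping):
    """Return {control: sorted list of higher-tier items that map to it}."""
    inverted = defaultdict(set)
    for source, targets in mapping.items():
        for target in targets:
            inverted[target].add(source)
    return {target: sorted(sources) for target, sources in inverted.items()}


def non_unique_targets(mapping):
    """Controls claimed by more than one higher-tier item."""
    return {t: s for t, s in invert(mapping).items() if len(s) > 1}


def coverage(mapping, implemented, rule):
    """Higher-tier items deemed 'covered' under a hypothetical rule.

    rule="any": covered if at least one mapped control is implemented.
    rule="all": covered only if every mapped control is implemented.
    """
    check = any if rule == "any" else all
    return sorted(
        source
        for source, targets in mapping.items()
        if check(t in implemented for t in targets)
    )


if __name__ == "__main__":
    implemented = {"CTL-B", "CTL-C", "CTL-D"}  # only the shared controls
    print(non_unique_targets(MAPPING))
    print("any-rule coverage:", coverage(MAPPING, implemented, "any"))
    print("all-rule coverage:", coverage(MAPPING, implemented, "all"))
```

With only the three shared controls in place, the lenient reading reports all three higher-tier items as covered, while the strict reading reports only FW-2. The mapping alone cannot say which reading applies, which is one way overconfidence creeps in.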

The Optimal Interplay of the Cyber Standards Hierarchy

Cyber Standards have a reasonably agreed-upon normative taxonomy (okay, maybe axiomatic is more accurate, but I digress). Additionally, each level of the taxonomy has a generally defined intended use case (axiomatic again, which provides convenient wiggle room against the rigor of a formal normative theory). Let's explore each level of the taxonomy from an optimized (normative) state of hierarchical interplay.

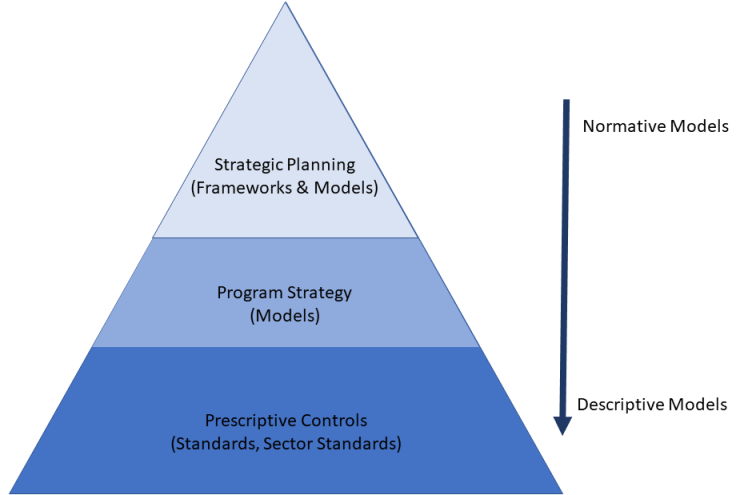

As Figure 1 illustrates, one can think of the Cyber Standards hierarchy (levels) as a pyramid. At the top of the pyramid are the high-level, typically organizationally focused frameworks. Models are more robust in their guidance; while they provide more detail than frameworks, most shy away from providing prescriptive controls. Standards, both general and sector, provide prescription; as descriptive models, they answer the question: which specific controls are needed?

I like to describe the idealized utilization of and interplay between frameworks, models, and standards as follows. For example purposes, I will use the terminology found in the NIST Cybersecurity Framework (CSF), the Cybersecurity Capability Maturity Model (C2M2), and the NIST SP 800-53 standard.

Frameworks provide high-level, cyber-related topics, or functional areas, broken down into categories and subcategories that executives should evaluate for appropriateness within their unique organization. Once the applicable subcategories are identified, they can be used as the outline for developing an organizational cyber strategy. Frameworks provide a means to validate a strategy to ensure against strategic gaps.

Leveraging the framework's subcategories, which are now codified into corporate strategy, a model provides the programmatic practices that, once evaluated for environmental appropriateness and implemented, ensure that the strategic intent of the framework's subcategories can be met. Models provide a means to validate a functional area to ensure against program-practice gaps.

Standards provide prescriptive controls that, once implemented, ensure that the programmatic intent of a model’s practices can be met. Standards provide a means to validate against control gaps within a practice area.
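The three validation checks above can be sketched as a toy gap analysis in Python. It assumes, as a simplification, that each tier reduces to a set of selected items plus links to the tier below; every identifier (SUB-1, PR-1, C-1, and so on) is hypothetical and does not correspond to a real CSF subcategory, C2M2 practice, or SP 800-53 control.

```python
# Hypothetical identifiers throughout; these are NOT real CSF subcategory,
# C2M2 practice, or SP 800-53 control IDs.
APPLICABLE = {"SUB-1", "SUB-2", "SUB-3"}  # subcategories executives deem applicable
STRATEGY = {"SUB-1", "SUB-2"}             # subcategories codified into strategy
SUB_TO_PRACTICE = {"SUB-1": {"PR-1", "PR-2"}, "SUB-2": {"PR-3"}, "SUB-3": {"PR-4"}}
PROGRAM = {"PR-1", "PR-3"}                # model practices adopted by the program
PRACTICE_TO_CONTROL = {
    "PR-1": {"C-1"},
    "PR-2": {"C-2"},
    "PR-3": {"C-3", "C-4"},
    "PR-4": {"C-5"},
}
IMPLEMENTED = {"C-1", "C-3"}              # standard controls actually in place


def tier_gaps(parents, links, present):
    """(parent, child) pairs where a child required by a selected parent is absent."""
    return [
        (parent, child)
        for parent in sorted(parents)
        for child in sorted(links.get(parent, ()))
        if child not in present
    ]


def validate(applicable, strategy, sub_to_practice, program,
             practice_to_control, implemented):
    return {
        # Framework tier: applicable subcategories missing from the strategy.
        "strategic": sorted(applicable - strategy),
        # Model tier: practices a strategy subcategory needs but the program lacks.
        "program_practice": tier_gaps(strategy, sub_to_practice, program),
        # Standard tier: controls an adopted practice needs that are not implemented.
        "control": tier_gaps(program, practice_to_control, implemented),
    }


if __name__ == "__main__":
    gaps = validate(APPLICABLE, STRATEGY, SUB_TO_PRACTICE, PROGRAM,
                    PRACTICE_TO_CONTROL, IMPLEMENTED)
    for tier, found in gaps.items():
        print(tier, found)
```

An organization aligned only to the framework would, in effect, run the first check and stop; the program-practice gap (SUB-1 lacking PR-2) and the control gap (PR-3 lacking C-4) would go unseen.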

As we can see, frameworks, models, and standards each have a unique and vital role to play in organizational cybersecurity planning, implementation, and execution. Looked at cohesively, as we did above, it is evident that this is the natural and intended flow. Yet, in my anecdotal experience, I rarely see all three document types utilized within an organization, and when I do, there is only nascent (if any) departmental coordination, much less organizational alignment.

Discussion

The real sin of any Cyber Standard is that being a normative model is not enough when that model is created in SDO isolation. To say nothing of the stateful duality, the identity crisis caused when documents waffle between normative and descriptive! We, as an industry, create, and we, as practitioners, accept Standards that have no formal grounding in established and accepted theory. Instead, we write and develop Standards and release them as floating free radicals that attach themselves to gaps in organizational strategy. With no coordinated nucleus of applied theory, these free radicals are as likely to produce organizational overconfidence as they are organizational maturity. As the following section will show, cybersecurity can learn much from well-established social science theories.

Expected Utility Versus the Behavioral Reality of Cyber Standards

If there is a core premise of organizational cybersecurity, it is the reduction of risk, specifically cyber risk. The unifying theory might state that organizations choose risk reduction strategies through optimization: that is, they select the optimal risk reduction strategy given organizational constraints such as budget, staffing, and regulatory requirements.
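For readers who like to see the Econ's version spelled out, the optimization premise can be sketched as a small budget-constrained selection problem (a 0/1 knapsack, solved here by brute force). The initiative names, costs, and risk-reduction scores are hypothetical, and treating risk reduction as additive across initiatives is a simplifying assumption. This is the normative model, not a claim about how organizations actually decide.

```python
from itertools import combinations

# Hypothetical initiatives: name -> (cost, expected risk reduction).
# Units and values are illustrative only; risk reduction is assumed additive.
OPTIONS = {
    "asset-inventory": (40, 30),
    "mfa-rollout": (30, 25),
    "network-segmentation": (60, 45),
    "siem-tuning": (20, 10),
}


def best_portfolio(options, budget):
    """Brute-force 0/1 knapsack: the affordable subset with the highest total reduction."""
    best, best_value = (), 0
    names = sorted(options)
    for size in range(1, len(names) + 1):
        for combo in combinations(names, size):
            cost = sum(options[name][0] for name in combo)
            value = sum(options[name][1] for name in combo)
            # Keep the first subset found that fits the budget with a strictly higher value.
            if cost <= budget and value > best_value:
                best, best_value = combo, value
    return best, best_value


if __name__ == "__main__":
    for budget in (50, 100):
        print(budget, best_portfolio(OPTIONS, budget))
```

With a budget of 100, the sketch selects asset-inventory plus network-segmentation (total reduction 75); with 50, it selects mfa-rollout plus siem-tuning (35). Brute force is only practical for a handful of options, which is fine for illustration.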

As I continue in my career as a cyber risk consultant, what I see is that organizations do not perform constrained optimization. Optimization relies on rational expectations, not only in the agency of the organization's employees but also in the organization itself. Heuristically, the primary constraint within an organization is likely bureaucracy, as it is undoubtedly a constraint that all organizations must recognize. However, few are capable of identifying entropy, specifically cyber risk entropy: the ever-increasing complexity of their systems and networks that can prevent a change in the state of their cyber strategy.

The poison to the employee-as-rational-agent is likely a combination of ignorance and the organization's own entropic poison pill.

Astute readers might be having flashbacks to their college economics courses right about now. This is because constrained optimization and rational (or bounded) expectations, along with expected utility theory (how to make decisions in risky situations), form the normative model of modern economic theory: optimization. In other words, if a person were to make every decision based on what is in their best financial interests, they would be employing the normative model of optimization. However, as Nobel Prize-winning economist Richard Thaler points out in his book Misbehaving, humans do not always behave in their own best interests. He captures this by distinguishing between Homo sapiens and Homo economicus, or Humans and Econs for short.
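For reference, the expected utility piece of that normative model can be written compactly. Here a is a choice from the feasible set A (the choices that satisfy constraints such as budget), x_i are its possible outcomes, p_i(a) their probabilities, and u a utility function; an Econ simply picks the feasible choice with the highest expected utility:

$$
\mathrm{EU}(a) = \sum_{i} p_i(a)\, u(x_i), \qquad a^{*} = \arg\max_{a \in A} \mathrm{EU}(a)
$$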

“Jason, Jason, Jason,” I can hear you saying. “Here you go again, man. Mucking up another fine article with your waffling. Get to the damn point already, will ya!”

Ok, fine…geez-o-Pete.

The normative flow of Cyber Standards, as described in the section above, assumes that organizations and their people optimize their cyber risk reduction activities, and thus their cyber strategy, and that they do so by employing the stratified yet charmingly cohesive framework-model-standard flow.

Homo Cyberus, We Are Not

Behavioral economics (“Oh god, here he goes again!” No, just hear me out!) recognizes that normative models work for Econs, but not for Humans. We are, after all, not (always) rational. Similarly, behavioral cybersecurity prospects the theory that normative, that is, optimized, organizational risk reduction models, aka Cyber Standards, are not only impractical but also fail to recognize the reality of all but the tiniest fraction of cyber-aware organizations. Now, I am not arguing that Standards need to change; normative models are an important tool for guiding the progression of cyber-embetterment. What I am arguing against is the industry's dogmatic adherence to the gum-flapping claim that what makes for a good normative model ipso facto makes for good, practical, implementable prescription.

Homo Cyberus, we are not!

There is much more that should be said on this topic, but I will save it for another article.

“Why?” no one is asking.

“Because I am tired now and want to go home, but here is my number. Maybe we could go out for coffee sometime?”

“Great, or maybe we could get together and just eat a bunch of caramels.”

“What?”

“When you think about it, it’s just as arbitrary as drinking coffee.”

“Okay, sounds good.”

Until next time, intrepid risk managers!

Notes

“Normative taxonomy”: More accurately, this Standards taxonomy is axiomatic, as, to my knowledge, there are no universally accepted, documented, and bounded definitions of the kind needed to support a normative model. My use of normative here, and its immediate parenthetical correction, is, admittedly, a flaunting waggle at the lack of rigor within Cyber Standards in general and the industry as a whole.

“generally defined”: While the hierarchy can be said to have a normative structure, not all Standards Development Organizations adhere to the taxonomy's intended use cases.

“Cybersecurity Capability Maturity Model”: While I used the C2M2 as a generic example of a cyber model, I would like to point out that the C2M2 is actually a maturity model. A maturity model encourages organizations to select not only the appropriate practices but also the desired maturity level for those practices. The C2M2 uses the FiLiPiNi (phil-a-pee-knee) scale: Fully Implemented, Largely Implemented, Partially Implemented, and Not Implemented.

“Misbehaving”: Richard Thaler, Misbehaving: The Making of Behavioral Economics (New York: W. W. Norton, 2015).

“Homo economicus”: Thaler, p. 4.

“Prospects the theory”: Hehehehe, prospect theory, get it? A Kahneman & Tversky easter egg!
