Data Compatibility: Understanding the Measurement and Metrics Implications of Developing and Updating a Cyber Framework

Misbehaving: Expected Utility Versus the Behavioral Reality of Cyber Standards

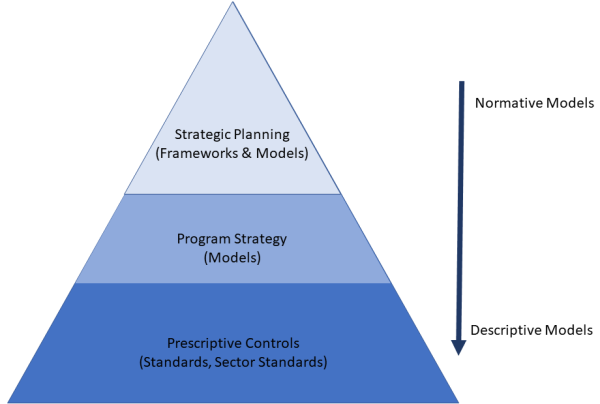

If there were a core premise of organizational cybersecurity, it would be the reduction of risk, specifically cyber risk. A unifying theory for that premise might state that organizations choose risk reduction strategies through optimization: selecting the optimal strategy given organizational constraints such as budget, staffing, and regulatory requirements.
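The optimization premise in this excerpt can be illustrated as a small budget-constrained selection problem. This is a minimal sketch under stated assumptions: each control has a known annual cost and a known expected loss reduction, and the reductions simply add up. The control names and figures are hypothetical. A brute-force search over all subsets stands in for whatever optimization method an organization might actually use.

```python
from itertools import combinations

# Hypothetical controls: name -> (annual cost, expected annual loss reduction).
# These figures are illustrative assumptions, not data from any organization.
CONTROLS = {
    "mfa": (40_000, 120_000),
    "edr": (90_000, 150_000),
    "patching": (60_000, 110_000),
    "training": (20_000, 30_000),
}


def optimal_strategy(controls, budget):
    """Return the subset of controls that maximizes total expected loss
    reduction without exceeding the budget, by checking every subset."""
    best_set, best_reduction = (), 0
    names = sorted(controls)
    for k in range(len(names) + 1):
        for subset in combinations(names, k):
            cost = sum(controls[n][0] for n in subset)
            reduction = sum(controls[n][1] for n in subset)
            # Keep the first subset that fits the budget with a strictly larger reduction.
            if cost <= budget and reduction > best_reduction:
                best_set, best_reduction = subset, reduction
    return best_set, best_reduction


if __name__ == "__main__":
    chosen, reduction = optimal_strategy(CONTROLS, budget=150_000)
    print(chosen, reduction)
```

With a budget of 150,000, the search selects `edr`, `mfa` and `training` for a combined expected reduction of 300,000. The additive, fully known inputs are the idealized expected-utility assumptions, so treat this as a baseline rather than a model of how organizations actually behave.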

Knightian Uncertainty: What Rumsfeld’s Unknown Unknowns Teach Us About Cyber Risk Management

True uncertainty is found in those stochastic and isomorphic events that take an organization by surprise, asymmetric to its cyber strategy. It was true uncertainty that Rumsfeld was referring to with “unknown unknowns,” and it is from this same Knightian percentage that the next zero-day event will appear.

Should Cyber Risk Likelihood Be Quantified?

What can George Pólya, the Hungarian mathematician whose How to Solve It appeared in 1945, teach us about cyber risk quantification?

What Does Schrödinger’s Cat Have To Do With Cyber Risk?

Bob Odenkirk's 'A Load of Hooey'

What can cyber risk management learn from quantum theory? Are there similarities or shared challenges that the two fields face? After all, both are largely esoteric, both seek to quantify the seemingly impossible, and both face the sad fact that those who espouse their respective practices are often maligned...

5 Types of Risk Response, Yes, Five!

The hidden disposition of Risk Ignore has its roots in the organizational difficulty of applying existing ERM practices equally across a complex organization.

Lacking two cyber controls led TalkTalk to lose 150k customers in just three months

On 21 October 2015, TalkTalk, a major UK telecommunications provider with over 4 million customers, suffered what it called a “significant and sustained cyber attack”.